Can We Trust Zero Trust?

What AI Changes About Security Boundaries

Zero trust has become one of those phrases that feels both unavoidable and unquestionable. It shows up in board decks, vendor roadmaps, and architectural diagrams as a design philosophy. “Never trust, always verify” sounds timeless, almost axiomatic. But axioms only hold within the worlds they were built for.

What I find myself wondering lately isn’t whether zero trust is wrong, but whether we’ve kept its definition fixed while the environment around it has changed. The assumptions that shaped zero trust were grounded in a very different operational reality, and the systems we’re building now behave in ways those assumptions never had to account for. To understand how zero trust needs to evolve, it helps to look at the world it was built for and the one we’re applying it to today.

The World Zero Trust Was Built For

When I look at this diagram, I’m reminded of how comforting traditional network security used to feel. There was an outside and an inside, and the line between the two mattered. The internet sat on one side, the enterprise lived on the other, and everything meaningful flowed through a small number of very intentional choke points. If we could see those choke points, control them, and monitor them, we had a handle on risk.

The next-generation firewall was the star of that world. It was the place where intent was inspected, policies were enforced, and trust was withheld. North–south traffic was scrutinized, decrypted if necessary, and either allowed or dropped. East–west traffic stayed largely contained within known segments. We knew where the endpoints were, what servers lived where, and which routes were acceptable. The network topology told a story we could reason about.

Defense-in-depth made sense. Each layer had a defined role. Endpoints carried anti-malware, EDR, and DLP to catch what slipped through. Servers ran agents that watched processes and file access. Network devices enforced segmentation and limited blast radius. Data lived close to the systems that used it, and when it moved, it followed paths we could diagram, document, and defend. Even when something went wrong, we could usually reconstruct the sequence of events by walking the flow in reverse.

This model wasn’t perfect, and it accumulated plenty of technical debt over time, but it was legible. Systems did what they were built to do. Data moved where it was explicitly allowed to move. Trust was reduced, verified, and continuously re-evaluated at boundaries we understood. Zero trust, in this context, was a natural evolution of everything we already believed about networks: tighter controls, better visibility, and fewer assumptions.

The World We Live in Now

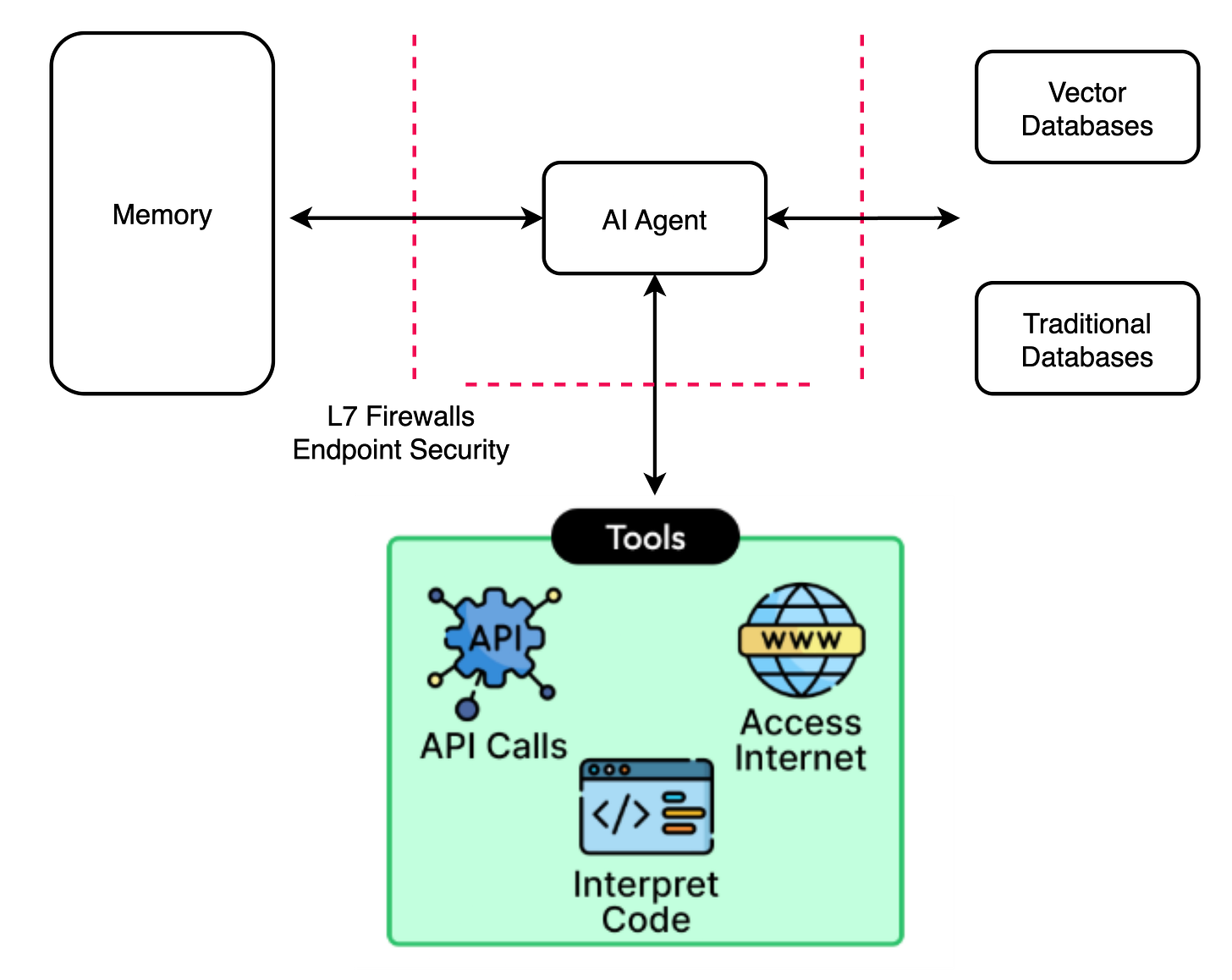

Compare this to today’s world. There is no longer an obvious perimeter where trust decisions naturally belong. Instead of traffic flowing between well-defined zones, everything seems to orbit around the AI agent itself. It becomes the focal point where data, context, and intent converge, and that alone changes how we think about control.

The agent doesn’t just consume data the way applications used to. It pulls from memory, queries vector databases, reaches into traditional data stores, and then decides what to do next based on what it learns. Those decisions aren’t hardcoded paths we can map ahead of time; they’re shaped in real time by prompts, intermediate results, and evolving goals. The system is no longer following a script but reasoning its way forward.

What unsettles me most is how action happens. The agent doesn’t need broad, direct access to everything. It operates through tools that already have permissions: APIs, code execution environments, and even direct access to the internet. Each tool call can be perfectly valid and policy-compliant on its own, inspected by L7 controls and endpoint security, yet the chain of actions they form can produce outcomes we never explicitly designed or approved.
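To make that gap concrete, here is a minimal sketch contrasting a per-call check with a check over the whole chain. Everything in it is an assumption made for illustration: the tool names, the `customer_pii` label, and the simple "sensitive read followed by external write" rule are hypothetical, and a real system would need far richer data-flow tracking than a single taint flag.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    tool: str               # hypothetical tool name
    reads: frozenset        # data labels this call reads
    writes_external: bool   # True if the call sends data outside the environment


# Hypothetical allowlist: every tool here is individually approved.
ALLOWED_TOOLS = {"crm_query", "summarize", "http_post"}


def call_is_allowed(call: ToolCall) -> bool:
    """Per-call check: looks only at the single tool invocation."""
    return call.tool in ALLOWED_TOOLS


def chain_is_allowed(chain: list, sensitive: set) -> bool:
    """Chain-level check: reject any sequence in which sensitive data is
    read and an external write happens at or after that point."""
    tainted = False
    for call in chain:
        if call.reads & sensitive:
            tainted = True
        if tainted and call.writes_external:
            return False
    return True


chain = [
    ToolCall("crm_query", frozenset({"customer_pii"}), False),
    ToolCall("summarize", frozenset(), False),
    ToolCall("http_post", frozenset(), True),
]

per_call = all(call_is_allowed(c) for c in chain)
whole_chain = chain_is_allowed(chain, {"customer_pii"})
print(per_call, whole_chain)  # prints: True False
```

Every call passes on its own, yet the sequence as a whole is exactly the kind of outcome nobody approved. The per-call check is not buggy; it simply has no view of composition.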

This is where the old zero trust instincts start to fray. Trust isn’t violated at a single boundary but accumulated across many small, legitimate decisions. Data doesn’t obviously “move” so much as it gets reassembled through delegation. The model is still layered and controlled, but it’s no longer fully legible. In this world, zero trust doesn’t feel wrong, but it does feel incomplete in ways we didn’t have to confront before.

Trust Without a Choke Point

Zero trust was never just about mistrust. It was about placing trust carefully at points where it could be verified, constrained, and revoked. The problem we face now isn’t that we’ve abandoned those principles, but that the places where trust naturally accumulates have shifted.

When reasoning systems act through chains of delegation, trust becomes emergent rather than explicit. When intent is inferred rather than declared, verification becomes probabilistic. And when outcomes arise from composition instead of design, the old question, “Where do we enforce?”, no longer has a clean answer.

So can we trust zero trust? Maybe the better question is whether zero trust can evolve fast enough to remain meaningful in systems that no longer have clear choke points, stable intent, or legible flows. If we keep applying it as if nothing fundamental has changed, we risk mistaking policy compliance for control, and visibility for understanding.

Zero trust still matters. But trusting it blindly might be the one thing it was never meant to allow.

Really enjoyed this... especially the point that AI breaks the traditional idea of where trust “belongs.” What you describe is exactly what we’re seeing in agentic systems: the flow of action no longer follows predictable, network-visible paths. It gets reshaped in real time by tools, APIs, and delegated capabilities. That makes conventional Zero Trust enforcement - tied to ports, routes, or perimeter choke points - far less meaningful.

Where I’d push the conversation is this... AI hasn’t invalidated Zero Trust, but it has invalidated its network-centric implementation.

Your diagrams show the same pattern: the agent becomes the new centre of gravity, and trust is reconstructed across chains of delegation. That’s the exact failure mode in traditional networking models - they assume fixed topology and human-paced workflows. AI operates across domains, clouds, and toolchains at machine speed.

The evolution we need is identity-first, authenticated-before-connect overlays where:

- trust is bound to workloads, agents, and tools, not IP space

- every action is evaluated at the service or API level, not the subnet

- zero-inbound connectivity removes the “implicit reachability” problem

- lateral movement disappears structurally rather than being mitigated

- audit and policy follow the agent across boundaries, not the network
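As a rough sketch of the first, second, and last points in that list, the following shows an authorization decision keyed on agent identity and API action rather than on network location. It assumes the identity has already been authenticated before any connection is made, which is the part an overlay would provide and which is not modeled here. The identity strings, service names, and policy table are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    identity: str   # authenticated agent or workload identity (hypothetical values)
    service: str    # target service or API
    action: str     # specific operation being requested
    source_ip: str  # recorded on the request, deliberately ignored by the decision


# Hypothetical policy: (identity, service) -> set of permitted actions.
# Anything not listed is denied by default.
POLICY = {
    ("agent:invoice-bot", "billing-api"): {"read_invoice"},
    ("agent:invoice-bot", "mail-api"): {"send_receipt"},
}

# The audit trail is keyed on identity, so it follows the agent
# regardless of which network it connects from.
AUDIT_LOG = []


def authorize(req: Request) -> bool:
    """Evaluate a single action for a single identity, default deny."""
    allowed = req.action in POLICY.get((req.identity, req.service), set())
    AUDIT_LOG.append((req.identity, req.service, req.action, allowed))
    return allowed


ok_office = authorize(Request("agent:invoice-bot", "billing-api", "read_invoice", "10.0.0.5"))
ok_cloud = authorize(Request("agent:invoice-bot", "billing-api", "read_invoice", "203.0.113.9"))
bad_action = authorize(Request("agent:invoice-bot", "billing-api", "delete_invoice", "10.0.0.5"))
bad_agent = authorize(Request("agent:unknown", "billing-api", "read_invoice", "10.0.0.5"))
```

The same agent gets the same answer from either address, while an unlisted action or an unknown identity is refused even from an "internal" IP. Note that this still evaluates one action at a time, so it does not by itself address the chain-of-delegation problem the article raises.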

In other words: Zero Trust still holds - but the network is no longer the place to enforce it.

AI forces us to shift ZT upward into an identity + policy + overlay connectivity layer, where reasoning systems and delegated actions can be constrained without relying on the old choke points you rightly point out are disappearing.

Your conclusion is spot on: trusting ZT blindly is dangerous. But abandoning it would be worse.

We just need to implement it where AI actually lives - not where networks used to.

If you are interested in exploring this further, we are starting work on a paper in the Cloud Security Alliance on exactly this topic: Agentic AI/MCP and Zero Trust (connectivity).